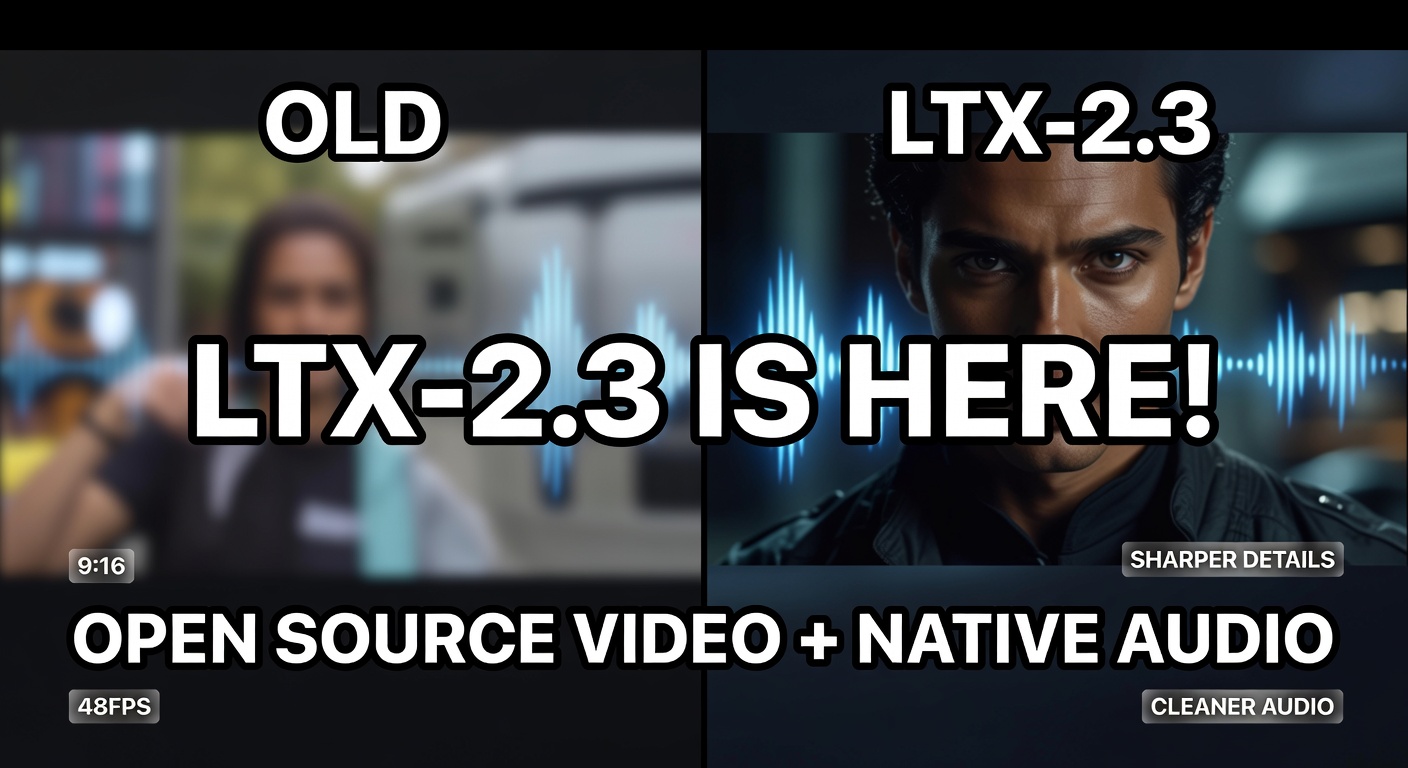

LTX-2.3 is one of the more notable open-source video releases this year because it targets the two things creators complain about most: soft detail and “messy” audio. The update focuses on sharper visuals, cleaner native sound, better prompt understanding, and new format and motion options, which makes it easier to generate clips that feel finished instead of experimental.

LTX-2.3 is available on GenAIntel, so you can start generating short videos immediately and compare results with other models in the same workspace.

What is LTX-2.3?

LTX-2.3 is Lightricks’ latest open-source video generation model, built on a DiT-based architecture and released under an Apache 2.0 license (commercial use and fine-tuning permitted). It’s designed for practical local execution and emphasizes a unified audio-video approach, where sound can be generated alongside the visuals in one coherent process. Official pages: LTX-2.3 model page and the open-weights listing on Hugging Face.

What’s new in LTX-2.3 (the upgrades that matter)

LTX-2.3 is not a small tune-up. It introduces core system upgrades intended to improve sharpness, motion, audio reliability, and format flexibility.

- New VAE architecture for sharper fine details, textures, and facial features

- Cleaner native audio generated alongside video (more reliable, fewer artifacts)

- Better prompt understanding for more accurate scene composition

- Portrait 9:16 support in addition to landscape formats

- 24 FPS and 48 FPS options for smoother motion

- Last-frame interpolation for seamless transitions between shots

- LoRA fine-tuning support for custom styles and characters

The headline feature: better native audio that actually matches the scene

The most creator-visible improvement in LTX-2.3 is native audio quality. Earlier audio-video generators often produced random noise, inconsistent ambience, or sound that didn’t fit the visual timing. LTX-2.3 focuses on cleaner audio generation that better matches what’s happening: footsteps that land with movement, environmental ambience that fits the setting, and fewer unwanted “drops” or weird artifacts. The goal is a clip that feels cohesive without immediately requiring a separate sound pass.

Video examples with prompts you can try

These two prompts are designed to showcase what LTX-2.3 is known for: sharp detail, smooth motion, and audio that fits the moment.

Example 1: FPV drone spiral and atmospheric decay

Drone descent through the open oculus of a derelict Soviet-era radio telescope dish, spiraling downward into the rusted parabolic bowl where a lone botanist catalogs wildflowers growing through cracked concrete, her red jacket the only color against oxidized metal and grey sky, 24mm on a caged FPV drone, the descent creating a vertigo spiral, Tarkovsky Stalker zones of alien beauty reclaiming technology, science swallowed by nature

Example 2: Ground-level tracking and hyper-detail slow motion

Snorkel lens ground-scraping tracking shot following a barefoot Ethiopian long-distance runner training on a dirt road at dawn, camera inches from the ground racing alongside, her feet kicking up red dust in slow motion at 240fps, Rift Valley landscape blurred in the background, the texture of earth and callused skin in hyper-detail, 40mm snorkel lens at ground height, Nike Running documentary meets Lubezki natural light, the poetry of human endurance

New creative controls: portrait video, FPS options, and seamless transitions

Three additions are especially useful for real-world content creation:

- Portrait 9:16 support helps creators produce vertical content for Shorts/Reels/TikTok without awkward cropping.

- 24/48 FPS options let you choose between the standard cinematic frame rate and smoother motion (48 FPS can reduce jitter in fast movement).

- Last-frame interpolation helps a shot end smoothly and makes it easier to stitch sequences together without a harsh cut.
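To make the format and frame-rate options above concrete, here is a minimal Python sketch that turns a clip length, an FPS choice, and an orientation into a frame size and frame count. The function name `clip_plan` and the 1280x720 base resolution are illustrative assumptions, not LTX-2.3 settings; check the model page for the resolutions it actually supports.

```python
def clip_plan(seconds, fps, portrait=False, long_side=1280, short_side=720):
    """Return frame size and frame count for a clip.

    long_side/short_side are assumed example values (a 16:9 pair),
    not documented LTX-2.3 resolutions.
    """
    if fps not in (24, 48):
        raise ValueError("this sketch only covers the 24 and 48 FPS options")
    # Portrait 9:16 is the landscape 16:9 frame with the sides swapped.
    if portrait:
        width, height = short_side, long_side
    else:
        width, height = long_side, short_side
    return {
        "width": width,
        "height": height,
        "fps": fps,
        "frames": round(seconds * fps),  # 48 FPS doubles the frames of 24 FPS
    }


print(clip_plan(5, 24))
print(clip_plan(5, 48, portrait=True))
```

The arithmetic shows the trade-off behind the 48 FPS option: the same clip length needs twice as many frames, so expect longer generation times for the smoother motion.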

What creators are noticing (early community impressions)

Early community posts generally report improved quality and detail, plus better consistency in motion when prompts are simple and intentional. Like any brand-new release, users still report occasional artifacts and variability depending on scene complexity. If you want to skim hands-on impressions, see: LTX 2.3 first impressions (community discussion).

Want a benchmark pack for LTX-2.3?

Save these prompts and build a repeatable test set to compare models across detail, motion, and audio coherence.
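One way to make such a test set repeatable is to expand each prompt across the settings you want to compare and keep the result in a file. The sketch below is a hedged example of that idea: the field names, the fixed `seed` value, and the 1-to-5 style score slots are assumptions for illustration (not every platform exposes a seed), and the prompt strings are shortened openings of the two examples above, so paste in the full text before using it.

```python
import itertools
import json

# Shortened openings of the article's two example prompts; replace with full text.
PROMPTS = {
    "fpv_drone_spiral": "Drone descent through the open oculus of a derelict "
                        "Soviet-era radio telescope dish",
    "ground_tracking_runner": "Snorkel lens ground-scraping tracking shot following "
                              "a barefoot Ethiopian long-distance runner",
}


def build_test_set(prompts, fps_options=(24, 48), aspects=("16:9", "9:16"), seed=1234):
    """Expand every prompt across FPS and aspect-ratio options.

    The seed is an assumed knob: reuse it across models only where the
    platform actually lets you set one.
    """
    cases = []
    for (name, text), fps, aspect in itertools.product(
        prompts.items(), fps_options, aspects
    ):
        cases.append({
            "id": f"{name}-{fps}fps-{aspect.replace(':', 'x')}",
            "prompt": text,
            "fps": fps,
            "aspect": aspect,
            "seed": seed,
            # Filled in by hand after watching each clip.
            "scores": {"detail": None, "motion": None, "audio_coherence": None},
        })
    return cases


cases = build_test_set(PROMPTS)
print(json.dumps(cases[0], indent=2))
```

Running the same case list against each model keeps the comparison fair: every model sees identical prompts and settings, and the three score slots match the criteria named above (detail, motion, audio coherence).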

FAQ

Is LTX-2.3 open source and can it be used commercially?

Yes. LTX-2.3 is released with open weights and an Apache 2.0 license, which permits commercial use and fine-tuning. For the official listing, see LTX-2.3 on Hugging Face.

What’s the biggest improvement over LTX-2?

The most noticeable upgrades are sharper details (new VAE), cleaner native audio generation, and better prompt understanding, plus new format and motion options like portrait 9:16 and 24/48 FPS.

Does LTX-2.3 generate audio automatically?

LTX-2.3 is designed to generate audio alongside video when the platform and workflow enable it. To get the best results, describe ambience and sound effects explicitly in your prompt (for example: wind, footsteps, room tone).
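If you generate many clips, a small helper can keep the audio description consistent. The following is a minimal sketch; the `with_audio_cues` function and the "Audio:" label are illustrative conventions, not a documented LTX-2.3 prompt syntax.

```python
def with_audio_cues(prompt, cues):
    """Append an explicit sound description to a visual prompt.

    The "Audio:" label is an assumed convention for readability,
    not a keyword the model is documented to recognize.
    """
    cleaned = [cue.strip() for cue in cues if cue.strip()]
    base = prompt.strip()
    if not cleaned:
        return base
    return f"{base.rstrip('.')}. Audio: {', '.join(cleaned)}."


print(with_audio_cues(
    "A runner on a dirt road at dawn",
    ["wind", "footsteps on gravel", "distant birdsong"],
))
```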

Is portrait 9:16 supported?

Yes. LTX-2.3 adds native portrait 9:16 support in addition to landscape formats, which is particularly useful for vertical social content. For the official overview, see LTX-2.3 model page.

What does last-frame interpolation help with?

It helps clips end more smoothly and can make transitions between shots feel less abrupt, which is useful when you’re stitching multiple short clips into a longer sequence.